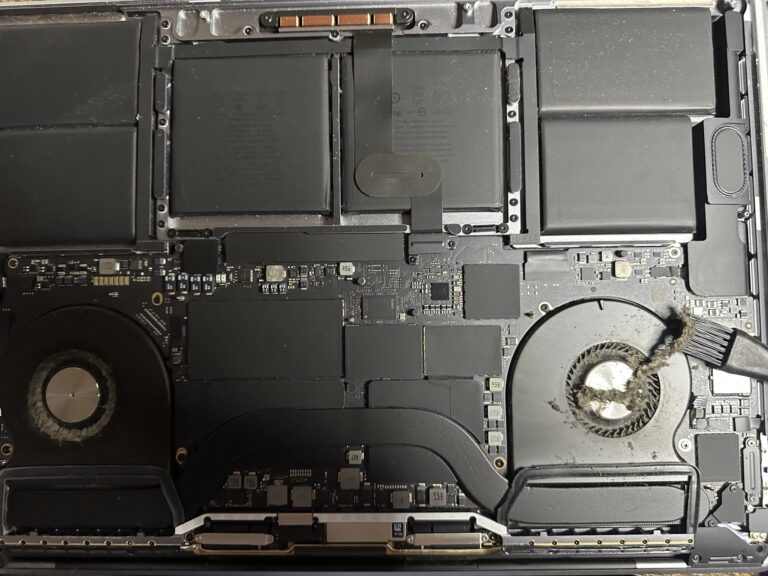

If you’ve been following my journey, you might know that I recently bought an M2 Max MacBook Pro. However, I also own a 2019 Intel MacBook Pro, which has started to slow down significantly in recent days. Thankfully, I was able [Read More…]

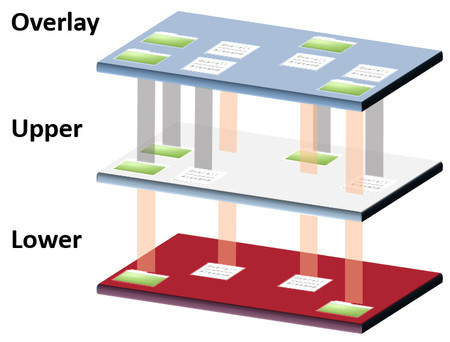

Attending AWSome Day Online Conference 2019

AWSome Day was a free online conference and training event, sponsored by Intel, that provided a step-by-step introduction to the core AWS (Amazon Web Services) services. It was free and open to everyone. It was scheduled on 26 [Read More…]