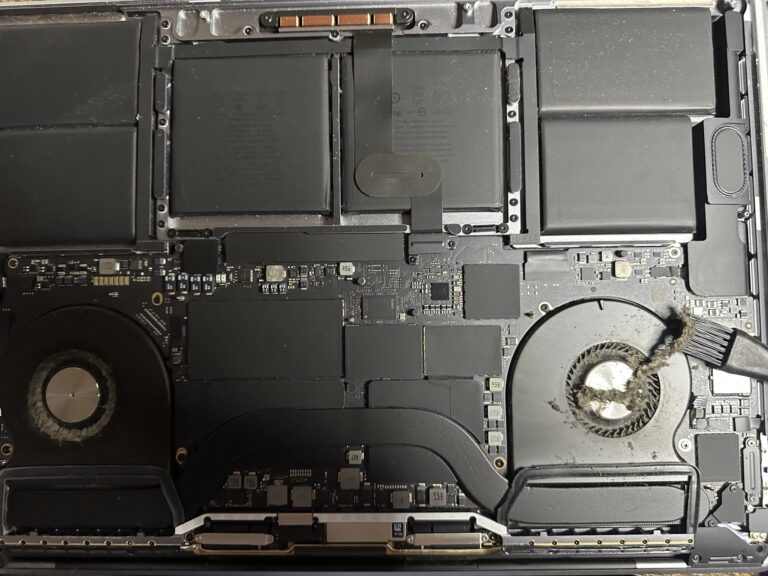

If you’ve been following my journey, you might know that I recently bought an M2 Max MacBook Pro. However, I also own a 2019 Intel MacBook Pro, which started to slow down significantly in recent days. Thankfully, I was able [Read More…]

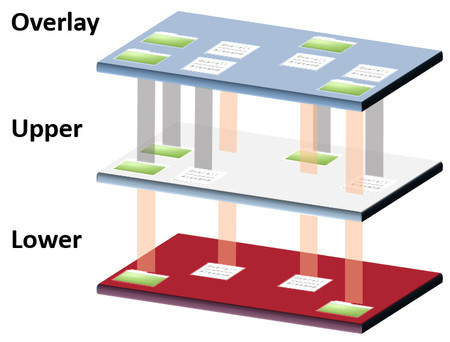

MariaDB-10.1 Galera Cluster on CentOS 7

Some times back, i posted two articles on MariaDB Master-Master replication and MariaDB Master-Slave replication. Well, after several requests from friends, i was asked to blog on MariaDB Galera Cluster. MariaDB Galera Cluster is a synchronous multi-master cluster for MariaDB. [Read More…]