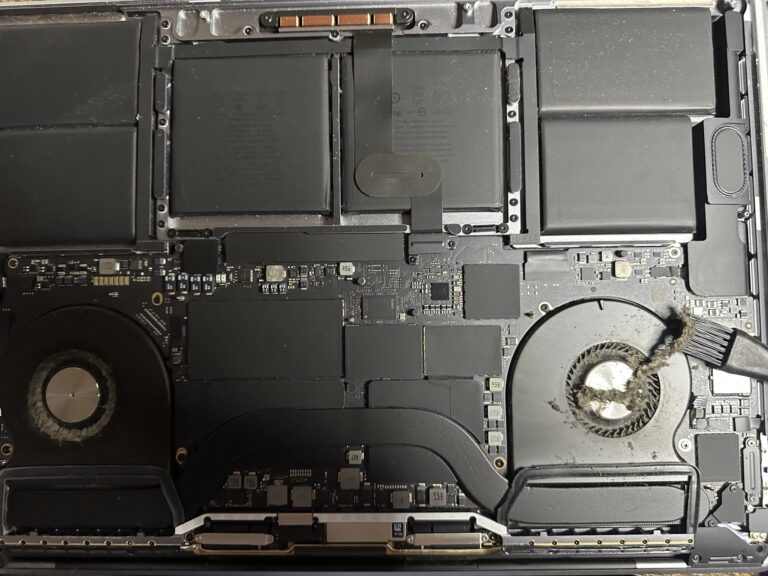

If you’ve been following my journey, you might know that I recently bought an M2 Max MacBook Pro. However, I also own a 2019 Intel MacBook Pro, which started to slow down significantly in recent days. Thankfully, I was able [Read More…]

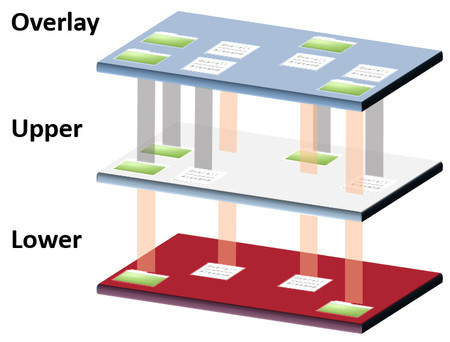

Securing MySQL traffic with Stunnel in a jail environment on CentOS

Stunnel is a program by Michal Trojnara that allows you to encrypt arbitrary TCP connections inside SSL. Stunnel can also allow you to secure non-SSL aware daemons and protocols (like POP, IMAP, LDAP, etc) by having Stunnel provide the encryption, [Read More…]