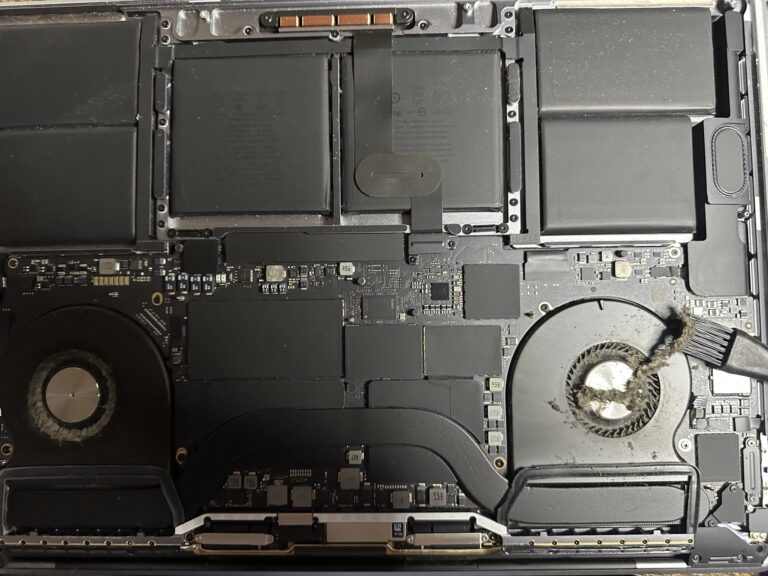

If you’ve been following my journey, you might know that I recently bought an M2 Max MacBook Pro. However, I also own a 2019 Intel MacBook Pro, which has started to slow down significantly in recent days. Thankfully, I was able [Read More…]

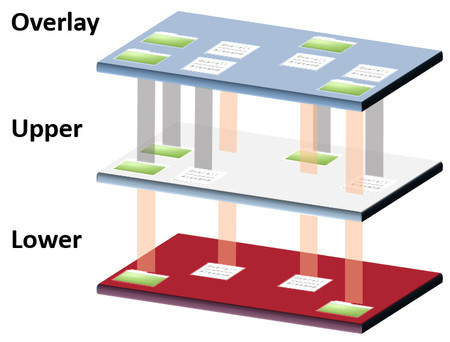

Components of VMware vSphere 6.0 – Part 2

As mentioned in my previous post, Components of VMware and vSphere 6.0 – Part 1, the aim of the article is to publish a continuous summary of the Data Center Virtualization exam. This article will focus on the [Read More…]